By converting songs to spectrograms, a convolutional neural network (CNN) can learn spatial patterns in them and use these patterns to identify the components that make up human vocals, which is a form of classification. This classification is a subproblem of our project: training our model to decide whether a given section of audio contains human vocals. The overall problem, however, is technically a regression problem, since our model needs to predict a continuous sequence of audio that is composed strictly of the human vocals.

An important piece of prior work that we will be drawing on concerns preprocessing, specifically how to transform the time-domain signal of a music sample into a spectrogram. Getting the spectrogram of a music sample is important because it exposes the structure of human speech in the sample and lets us use the CNN model architecture from the paper we are implementing. Since this has been shown to be effective in previous research, we will apply a Short-Time Fourier Transform (STFT) to our input signals to generate their corresponding spectrograms.

Another important piece of prior work that we will be drawing on is “Improving music source separation based on deep neural networks through data augmentation and network blending”, a previous attempt at solving this problem using feed-forward and recurrent neural networks.
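To make the Short-Time Fourier Transform preprocessing step more concrete, here is a minimal NumPy sketch of how a time-domain signal can be turned into a magnitude spectrogram. The function name `stft_magnitude`, the frame size of 512 samples, the hop of 128 samples, and the Hann window are our own illustrative assumptions, not settings taken from either paper.

```python
import numpy as np


def stft_magnitude(signal, n_fft=512, hop=128):
    """Return a magnitude spectrogram of shape (n_fft // 2 + 1, n_frames).

    Hypothetical helper for illustration: slice the signal into overlapping
    frames, apply a Hann window to each frame, and take the FFT per frame.
    """
    signal = np.asarray(signal, dtype=np.float64)
    if len(signal) < n_fft:
        raise ValueError("signal must contain at least n_fft samples")

    window = np.hanning(n_fft)
    n_frames = 1 + (len(signal) - n_fft) // hop

    # Each row is one windowed frame of the time-domain signal.
    frames = np.stack(
        [signal[i * hop : i * hop + n_fft] * window for i in range(n_frames)]
    )

    # rfft keeps only the non-negative frequencies of a real-valued signal.
    spectrum = np.fft.rfft(frames, axis=1)

    # Transpose so that rows are frequency bins and columns are time frames.
    return np.abs(spectrum).T


# Toy input: one second of a 440 Hz tone sampled at 8 kHz (assumed values).
sample_rate = 8000
t = np.arange(sample_rate) / sample_rate
tone = np.sin(2 * np.pi * 440 * t)

spec = stft_magnitude(tone)
print(spec.shape)  # (257, 59)
```

The resulting 2D array can be treated like a single-channel image, which is what makes a CNN applicable. In practice a library routine such as `scipy.signal.stft` would likely replace this hand-written loop.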

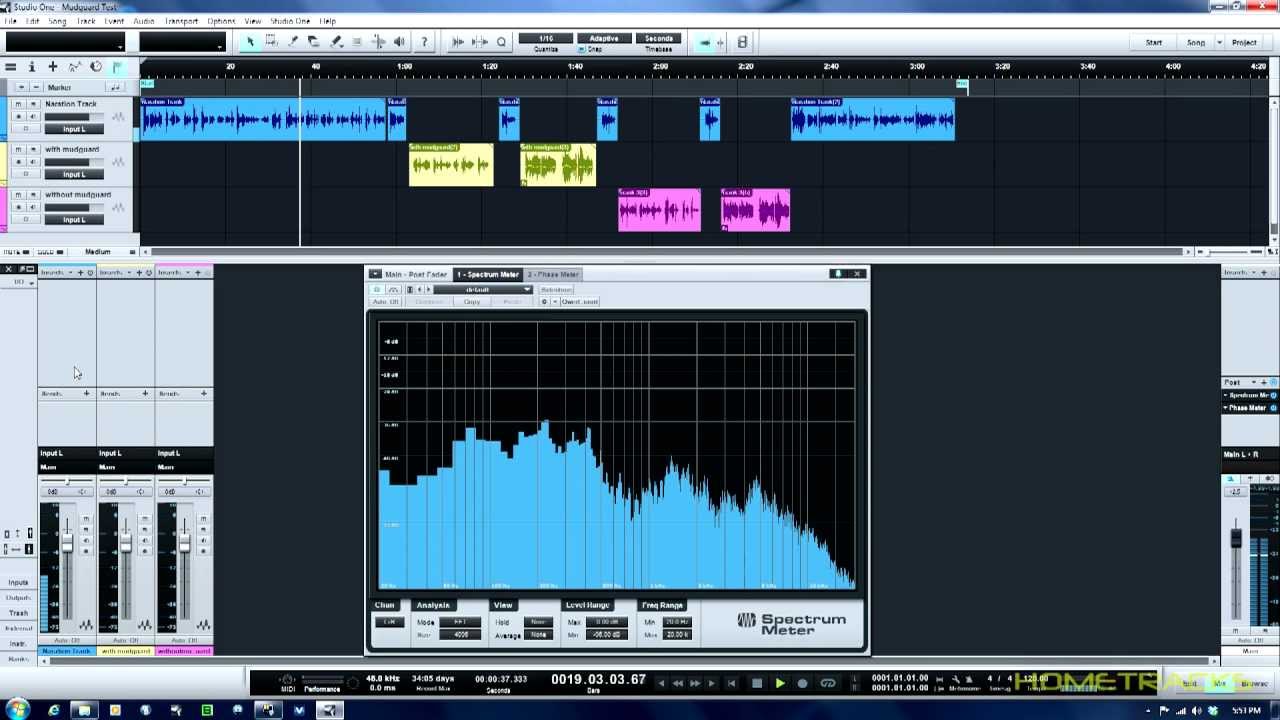

While humans are quite adept at separating different sounds from a musical signal (vocals, individual instruments, etc.), computers have a much harder time doing so effectively. The goal of our final project is to create a model that, given a song or recording, can isolate, extract, and output the vocals of the song with as little interference from background noise as possible. We are doing this because components extracted from a song, such as vocals, can be used by artists to create other music. Artists can use this model to extract the vocals from a song and then creatively sample or alter the snippet to create their own original music. Overlaying popular vocals on an original accompaniment or beat is a very common way of making music (especially in genres such as lofi and rap), so an effective way of extracting vocals from songs would be very helpful for many artists. Listening to accurately isolated stems can also be a fascinating educational tool for performers, producers, and mixers, as well as for music appreciators in general!

The paper that we will be implementing is “Singing Voice Separation Using a Deep Convolutional Neural Network Trained by Ideal Binary Mask and Cross Entropy”. Its objective was to create a neural network that can extract a singing voice from its musical accompaniment. We chose this paper because it takes a novel approach to the vocal extraction problem. Many researchers have tried to solve this problem using recurrent neural networks in order to capture temporal changes in a song. This paper, however, uses a convolutional neural network (something traditionally associated with analyzing visual imagery) to extract vocal samples. This is possible through the use of spectrograms, which are visual representations of how the frequency spectrum of an audio signal varies with time.
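The title of the paper we are implementing names an ideal binary mask as its training target. As a rough sketch of what such a mask looks like, assuming the common definition (a time-frequency bin is marked 1 when the vocal magnitude exceeds the accompaniment magnitude, and 0 otherwise), the snippet below builds one from two magnitude spectrograms and applies it to a mixture. The function names and the toy arrays are ours for illustration and are not taken from the paper.

```python
import numpy as np


def ideal_binary_mask(vocal_mag, accomp_mag):
    """Return 1.0 where vocals dominate a time-frequency bin, else 0.0.

    Assumed definition for illustration; ties count as accompaniment.
    """
    return (np.asarray(vocal_mag) > np.asarray(accomp_mag)).astype(np.float32)


def apply_mask(mixture_mag, mask):
    """Keep only the mixture bins that the mask labels as vocal."""
    return np.asarray(mixture_mag) * mask


# Toy magnitude spectrograms: 2 frequency bins x 3 time frames (made-up values).
vocal_mag = np.array([[0.9, 0.1, 0.5],
                      [0.2, 0.8, 0.0]])
accomp_mag = np.array([[0.3, 0.4, 0.5],
                       [0.1, 0.9, 0.2]])

mask = ideal_binary_mask(vocal_mag, accomp_mag)

# Toy mixture: magnitudes are simply summed here, which ignores phase.
mixture_mag = vocal_mag + accomp_mag
estimated_vocals = apply_mask(mixture_mag, mask)

print(mask)
print(estimated_vocals)
```

Each mask entry is a binary label, so predicting the mask can be framed as a per-bin classification task, which is consistent with the cross entropy mentioned in the paper's title.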
